Most event-driven rewrites I’ve seen die with 80% of the message bus built, 20% of the consumers stubbed in, and zero of the hard problems solved. I’ve been the engineer on two of those deaths. I’ve also been the engineer on one event-driven modernization that actually shipped, at Bytro, over two years. This post is about what separated those outcomes, and it is not what the architecture diagrams say.

The comforting lie about event-driven

The talks and the blog posts make event-driven sound like a pattern: pick a message broker, define events, emit them from the write side, project them on the read side, done. Tooling is everywhere. Apache Kafka, NATS, RabbitMQ, SQS - you can stand up the infrastructure in a weekend.

This is why the rewrites get started. It’s also why they die.

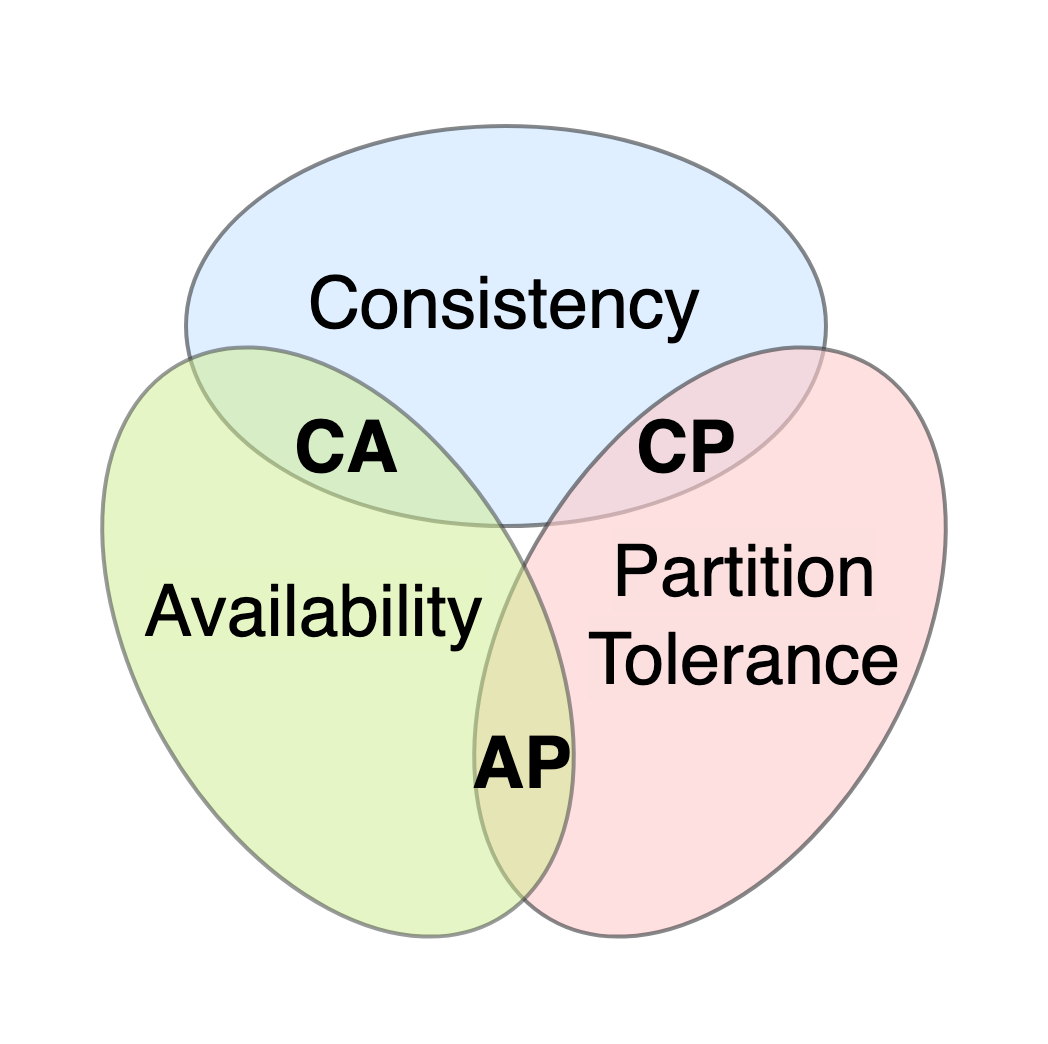

The comforting lie is that the architecture is the hard part. It isn’t. The hard part is everything the architecture forces you to make explicit. The synchronous system had a lot of implicit assumptions: request ordering, transactional boundaries, “this write is immediately visible to this read,” “if A happened, B is done.” The moment you split write from read, you also split all those assumptions - and if you don’t resolve them deliberately, your new system is a worse version of the old one that also has a message bus to operate.

What went right at Bytro

At Bytro, we modernized a real-time multiplayer game backend that had grown into a tangled legacy system over years. We went event-driven, and it shipped, and it worked. Here’s why:

1. We picked one domain, not the whole system

The temptation is to say “we’re going event-driven as an architecture principle.” We didn’t. We picked one specific pain point - match join latency - and said “this flow will be the first event-driven one. The rest stays synchronous until proven otherwise.”

That meant we could ship the first real event-driven path in months, not years. It also meant every other team’s feature work kept shipping on the old system. The modernization was a service, not a disruption.

2. We ran a shadow for 11 months

The new path was running in production from week four - at 0% of served traffic, shadowing every request from the old path, producing results that were never shown to users but were compared against the old results nightly.

The discrepancies were the spec. Every “the new system produces a different answer” was a bug in either the new system, the old system, or our understanding of the domain. We fixed roughly 200 of those over the 11 months. Every one of them would have been an incident if we’d cut over early.
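
To make the shadow idea concrete, here is a minimal sketch in Python. It is not the Bytro implementation: `ShadowRunner`, `ShadowRecord` and `nightly_diff` are hypothetical names, and the in-memory list stands in for whatever store the nightly comparison would actually read from. The point is only the shape: the old path's answer is always the one served, and the new path's answer (or its exception) is recorded for later comparison.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional


@dataclass
class ShadowRecord:
    """One request with both answers, kept for offline comparison."""
    request: dict
    old_result: Any
    new_result: Any
    error: Optional[str] = None  # set when the new path raised


@dataclass
class ShadowRunner:
    """Hypothetical wrapper: serves the old path, records the new one."""
    old_path: Callable[[dict], Any]
    new_path: Callable[[dict], Any]
    log: List[ShadowRecord] = field(default_factory=list)

    def handle(self, request: dict) -> Any:
        served = self.old_path(request)  # the only result users ever see
        try:
            shadow, error = self.new_path(request), None
        except Exception as exc:  # a shadow failure must never reach the user
            shadow, error = None, repr(exc)
        self.log.append(ShadowRecord(request, served, shadow, error))
        return served


def nightly_diff(log: List[ShadowRecord]) -> List[ShadowRecord]:
    """Return every record where the new path failed or disagreed."""
    return [r for r in log if r.error is not None or r.new_result != r.old_result]
```

In a real system the shadow call would normally run off the request thread so it cannot add latency to the served path; it is inline here only to keep the sketch short.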

3. We kept transactional boundaries honest

Event-driven does not mean “everything is eventually consistent and users should deal with it.” Some operations still have to be atomic. If a player joins a match, three things have to happen together: the player’s state updates, the match’s roster updates, the billing hook fires. These cannot be three separate events with prayers in between.

We used the transactional outbox pattern for those: the write happens inside a DB transaction, the event is written into an outbox table inside the same transaction, and a separate process ships the event to the bus later. You get atomicity where you need it, async delivery where you don’t.

Every “we don’t need transactions, we’re event-driven” conversation is a six-month disaster waiting to happen. Keep your transactional boundaries explicit and small.
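
A minimal sketch of the outbox idea, using Python's built-in `sqlite3` so it runs anywhere. The table names, the `player_joined_match` event type and the `publish` callback are all illustrative assumptions, not the Bytro schema. How the billing hook was wired isn't covered here; the sketch assumes it would be a consumer of the shipped event.

```python
import json
import sqlite3


def init_schema(conn: sqlite3.Connection) -> None:
    # Hypothetical tables: two pieces of domain state plus the outbox.
    conn.executescript("""
        CREATE TABLE players (id TEXT PRIMARY KEY, match_id TEXT);
        CREATE TABLE roster (
            match_id TEXT, player_id TEXT,
            PRIMARY KEY (match_id, player_id)
        );
        CREATE TABLE outbox (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            type TEXT NOT NULL,
            payload TEXT NOT NULL,
            shipped INTEGER NOT NULL DEFAULT 0
        );
    """)


def join_match(conn: sqlite3.Connection, player_id: str, match_id: str) -> None:
    # `with conn` commits if the block succeeds and rolls back if it raises,
    # so the state changes and the event row land together or not at all.
    with conn:
        conn.execute(
            "INSERT OR REPLACE INTO players (id, match_id) VALUES (?, ?)",
            (player_id, match_id),
        )
        conn.execute(
            "INSERT INTO roster (match_id, player_id) VALUES (?, ?)",
            (match_id, player_id),
        )
        conn.execute(
            "INSERT INTO outbox (type, payload) VALUES (?, ?)",
            ("player_joined_match",
             json.dumps({"player_id": player_id, "match_id": match_id})),
        )


def ship_outbox(conn: sqlite3.Connection, publish) -> int:
    """Relay step: publish unshipped rows in order, then mark them shipped."""
    rows = conn.execute(
        "SELECT id, type, payload FROM outbox WHERE shipped = 0 ORDER BY id"
    ).fetchall()
    for row_id, event_type, payload in rows:
        publish(event_type, json.loads(payload))  # if this raises, the row is retried
        with conn:
            conn.execute("UPDATE outbox SET shipped = 1 WHERE id = ?", (row_id,))
    return len(rows)
```

Note the delivery guarantee this gives you: if the relay crashes after `publish` but before the `UPDATE`, the event is sent again on the next run. That is at-least-once delivery, so consumers need to be idempotent.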

4. We designed events for consumers we hadn’t built yet

The hardest event-driven decision is what to put in an event. Too little, and every consumer has to join against the write-side DB to enrich the payload (which defeats the point). Too much, and you bake today’s schema into every consumer’s code and can’t evolve.

The rule we landed on: events carry the minimum payload that lets a reasonable consumer render the user-visible outcome without a round-trip. Not “all the fields the write side has.” Not “just the ID.” Somewhere in between, and the exact shape was domain-specific work that took months to get right.

Events are a public contract. Treat the event schema with the same seriousness you’d treat a REST API. Version it. Document it. Change it additively.
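
Here is roughly what "version it, change it additively" can look like on the consumer side, as a hedged sketch. The event name, its fields (`display_name`, `seat`) and the `major.minor` version string are invented for illustration; the real payload shape is the domain-specific part. The consumer reads the fields it needs, ignores anything it doesn't know, and refuses only a major version it was never written for.

```python
from dataclasses import dataclass
from typing import Any, Mapping, Optional

SUPPORTED_MAJOR = 1  # this consumer understands any 1.x event


@dataclass(frozen=True)
class PlayerJoinedMatch:
    """Hypothetical event: enough to render the outcome without a lookup."""
    player_id: str
    match_id: str
    display_name: str
    seat: Optional[int] = None  # added in 1.1; optional so 1.0 events still parse


def parse_player_joined(raw: Mapping[str, Any]) -> PlayerJoinedMatch:
    if raw.get("type") != "player_joined_match":
        raise ValueError(f"unexpected event type: {raw.get('type')!r}")
    # Missing version is treated as the first published schema.
    major = int(str(raw.get("schema_version", "1.0")).split(".")[0])
    if major != SUPPORTED_MAJOR:
        raise ValueError(f"unsupported schema major version: {major}")
    # Tolerant reader: take the fields we need, ignore any extras.
    return PlayerJoinedMatch(
        player_id=raw["player_id"],
        match_id=raw["match_id"],
        display_name=raw["display_name"],
        seat=raw.get("seat"),
    )
```

With this shape, a producer can add a field in a minor version without breaking any deployed consumer. Removing or renaming a field is a major version bump and a coordinated migration, the same as it would be for a REST API.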

5. We had a rollback story at every step

Week four: feature flag off → back to 100% old path. Week twelve: flag off → back to old. Week forty: flag off → back to old. At no point was the rollback more than one config change away, and we tested that rollback monthly in a real drill.

The day we actually needed it - month eight, a corner case in event ordering - the rollback took two minutes. Because we’d drilled it.
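
The "one config change away" property is easier to keep if routing is a single pure function of the flag state. A small sketch, with hypothetical flag names (`event_driven_join_enabled`, `event_driven_join_percent`) and a hash-based bucket chosen here only so the same player always lands on the same path:

```python
import hashlib
from typing import Mapping


def rollout_bucket(key: str) -> int:
    """Map a key to a stable bucket in [0, 100)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def use_new_path(flags: Mapping[str, object], player_id: str) -> bool:
    # The kill switch wins over everything else: flag off means old path,
    # regardless of the configured percentage.
    if not flags.get("event_driven_join_enabled", False):
        return False
    percent = int(flags.get("event_driven_join_percent", 0))
    return rollout_bucket(player_id) < percent
```

A rollback drill then amounts to flipping `event_driven_join_enabled` to false in the config store and checking that served traffic on the new path drops to zero.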

What killed the other two rewrites

The two event-driven modernizations I watched die had most of the infrastructure in place. Brokers deployed, topics defined, serializers picked. They died on the things the infrastructure doesn’t solve:

- No shadow. They went live on a small percentage and hoped.

- Transactional boundaries papered over with “eventual.” Meaning: operations that needed to be atomic became race conditions in production.

- Events designed by committee. Every event had 40 fields because every team wanted their thing in there. The result was an unparseable firehose that nobody could subscribe to without a service of their own to preprocess it.

- No rollback. The cutover was “flip the flag.” If it didn’t work, it didn’t work.

All four of those failures are organisational and architectural, not infrastructural. Picking a different broker doesn’t fix any of them.

The 2026 footnote

Most of my current OSS work (Fulcrum) is itself event-driven in a specific way - agent runs produce events that flow through a memory / task / context bus. The same rules apply at small scale as at Bytro scale: pick one flow, shadow it, keep transactions honest, design events for consumers, drill the rollback.

The pattern is scale-invariant. The discipline is the thing that scales, not the broker.